Many use cases require you to perform an action whenever your database changes. For example, you might want to send an automated email each time a new user is added to your database. This is a perfect use case for DynamoDB Streams.

DynamoDB Streams can trigger AWS Lambda functions for each database change. For example, if you want to run a function whenever a new user is added to your user table, you can enable a DynamoDB stream on that table. A Lambda function is then triggered automatically and receives the new user.

In this post, we'll show you how to activate streams, what the resulting event looks like, and walk through a common example.

Let's go.

Creating Streams

You can create a stream with any Infrastructure as Code tool (Terraform, CDK, CloudFormation) or with the API or CLI. In this post, we will show you how to create streams with the Management Console.

Enable Streams

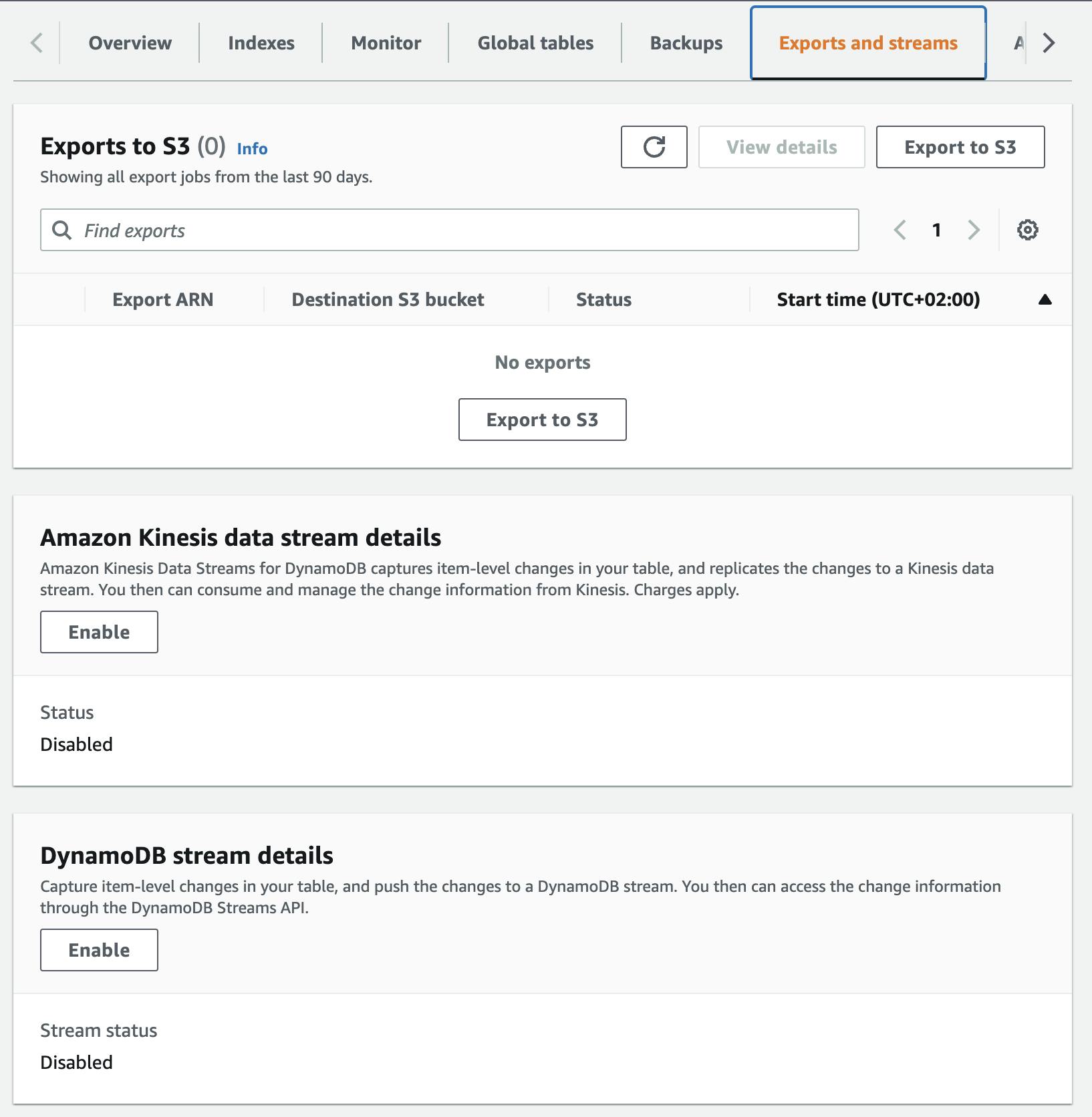

You first need to enable streams in the DynamoDB console:

Go to your table settings

Head over to the tab Exports and streams

In the DynamoDB stream details card, click on Enable

Define which data you want to consume in your lambda function

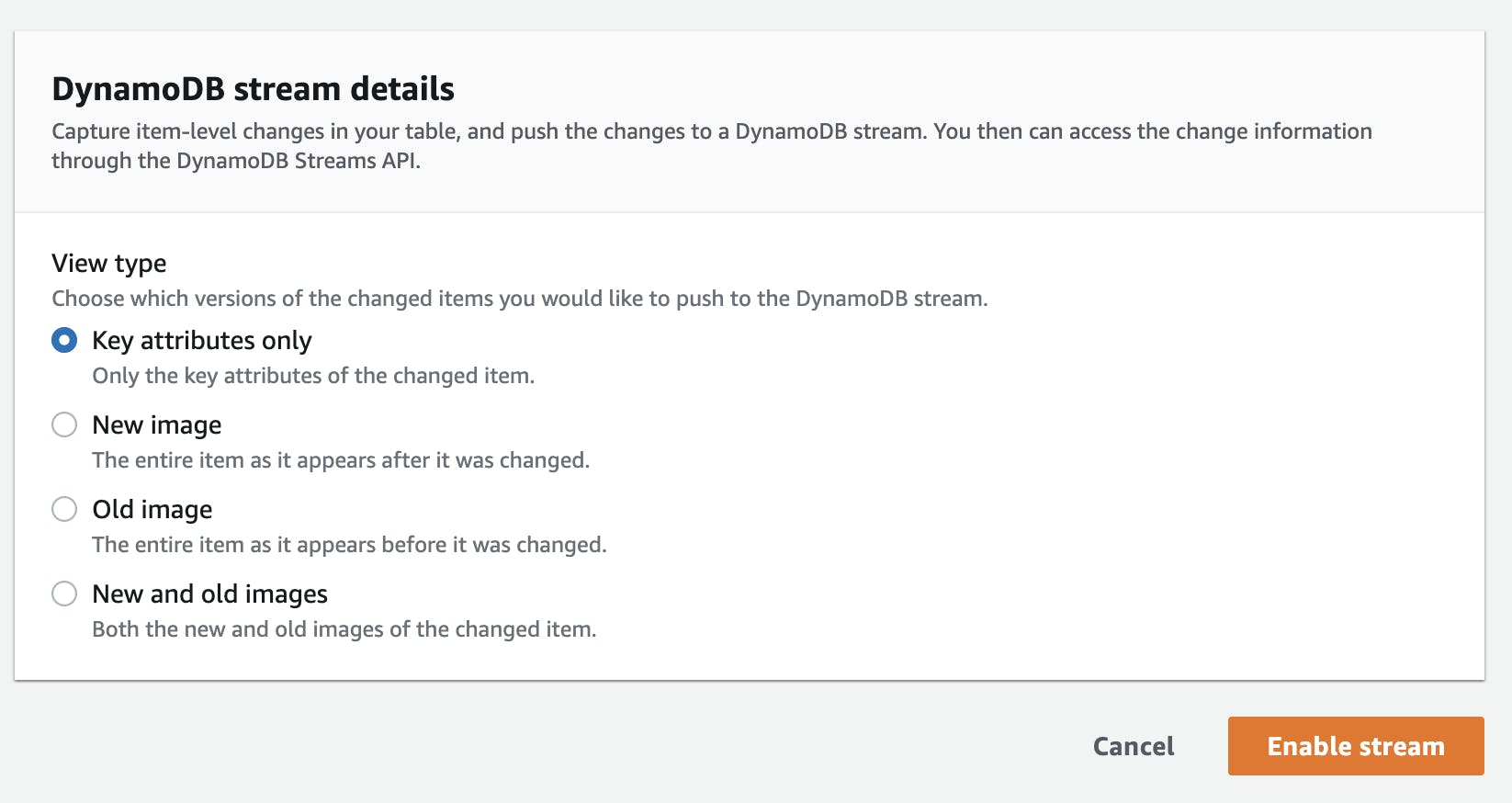

There are different options to choose from:

| Type | Description | Example when inserting a new user |
| --- | --- | --- |
| Key attributes only | Only the key attributes of the changed item. | userId |
| New Image | The whole item as it appears after the change. | The whole user object |
| Old Image | The whole item as it appeared before the change. | Nothing, because a newly inserted user has no state “before it was changed”. |
| New and old images | Both the whole item after the change and the item before the change. | Only the new user object, because no old image exists for an insert. |

For our example of getting new users, it is enough to choose New Image. With that, we always get the whole user object in the lambda function.
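If you prefer the API over the console, the same choice maps to the `StreamSpecification` of an `UpdateTable` call. The following is a minimal sketch rather than a definitive implementation: `buildEnableStreamInput` is a hypothetical helper that only builds the request input, and the table name `Users` is taken from the example ARN used later in this post. Sending the request is shown as a comment and assumes `@aws-sdk/client-dynamodb` is installed.

```javascript
// The four view types correspond to the four console options listed above.
const STREAM_VIEW_TYPES = ["KEYS_ONLY", "NEW_IMAGE", "OLD_IMAGE", "NEW_AND_OLD_IMAGES"];

// Hypothetical helper: builds the input for an UpdateTable call that enables a stream.
function buildEnableStreamInput(tableName, viewType = "NEW_IMAGE") {
  if (!STREAM_VIEW_TYPES.includes(viewType)) {
    throw new Error(`Unknown stream view type: ${viewType}`);
  }
  return {
    TableName: tableName,
    StreamSpecification: { StreamEnabled: true, StreamViewType: viewType },
  };
}

console.log(JSON.stringify(buildEnableStreamInput("Users"), null, 2));

// Assumed usage with the AWS SDK for JavaScript v3 (not executed here):
// const { DynamoDBClient, UpdateTableCommand } = require("@aws-sdk/client-dynamodb");
// await new DynamoDBClient({}).send(new UpdateTableCommand(buildEnableStreamInput("Users")));
```

Keeping the input builder separate from the SDK call makes it easy to check the request locally before touching a real table.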

Streams are enabled now ✅

Create a Trigger

Now we need to create a trigger. This connects the DynamoDB Stream to the Lambda function.

Go to your table settings

Head over to the tab Exports and streams

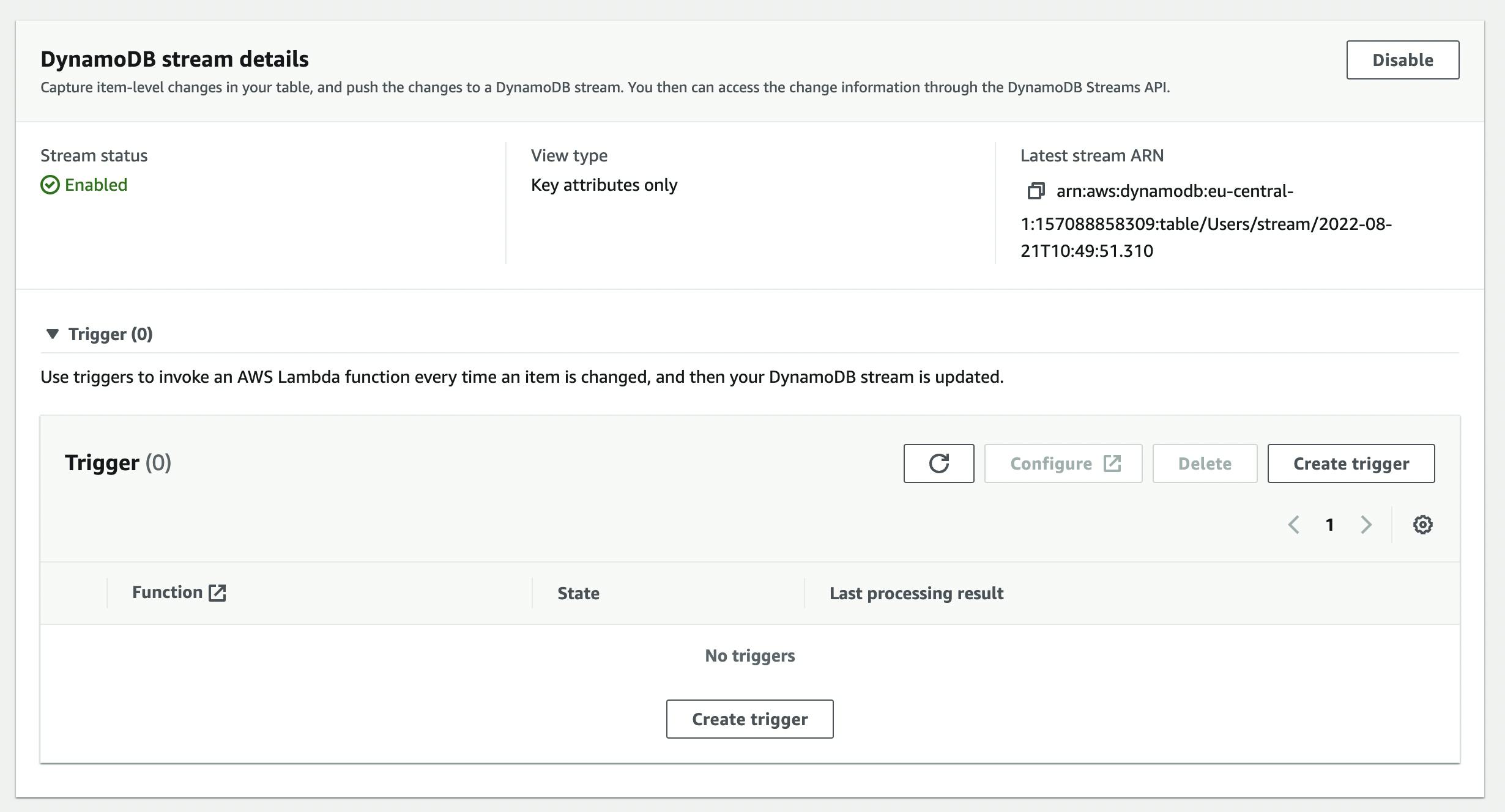

After enabling your streams you should now see a window with **Triggers**

Click on Create Trigger to create a new trigger

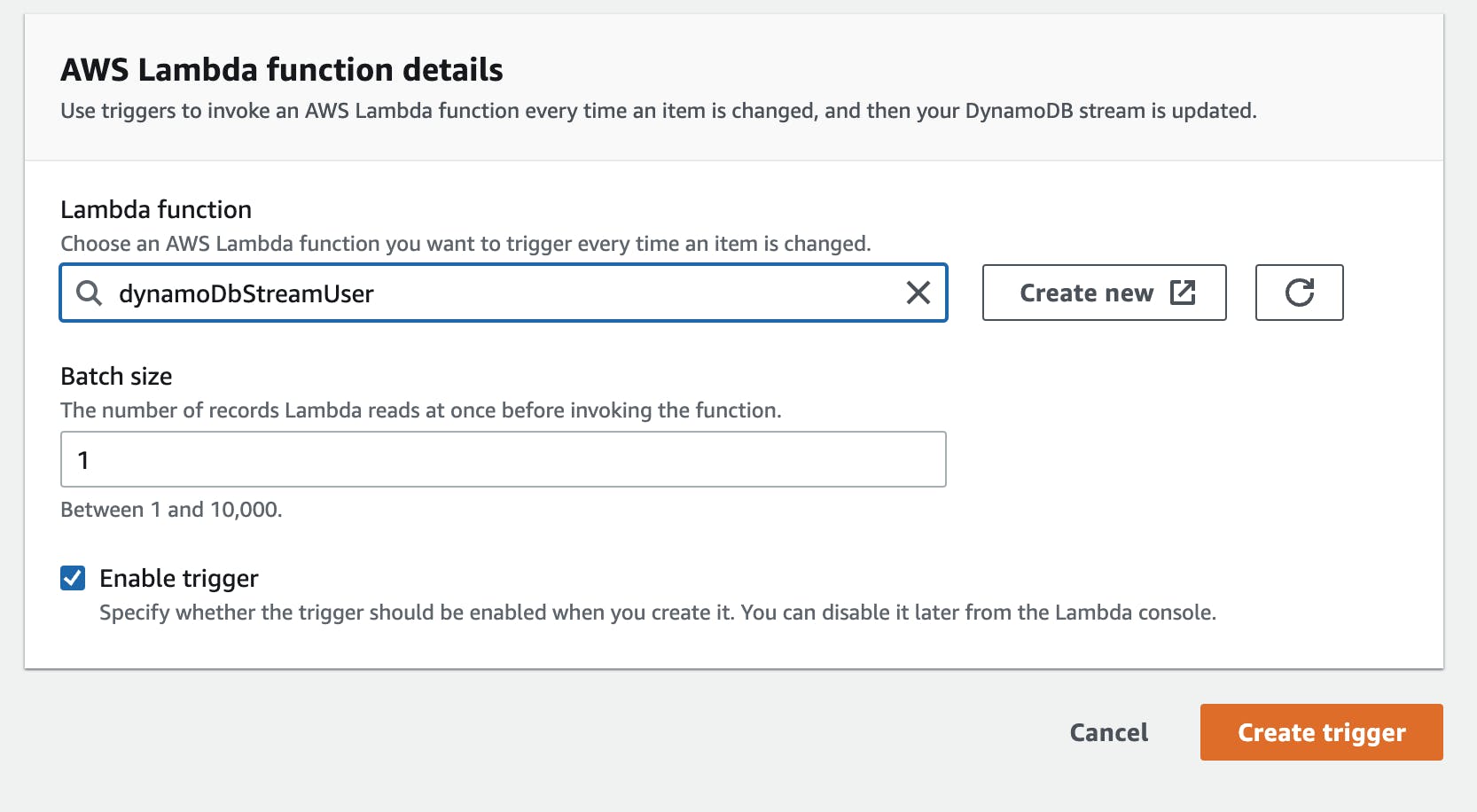

In this window, choose your Lambda function: either select an existing one or create a new one. You can also set the batch size, which is the maximum number of records passed to your function in a single invocation. We leave it at one for now.
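Behind the console dialog, a trigger is a Lambda event source mapping. As a rough sketch, the settings from this step could be expressed as input for a `CreateEventSourceMapping` call. The helper `buildTriggerInput` and the function name `new-user-handler` are made up for illustration. `StartingPosition` is an additional setting the API requires for stream sources, and `LATEST` is an assumption here.

```javascript
// Hypothetical helper: builds the input for a CreateEventSourceMapping call.
function buildTriggerInput(streamArn, functionName, batchSize = 1) {
  // Lambda accepts batch sizes from 1 to 10,000 for DynamoDB streams.
  if (!Number.isInteger(batchSize) || batchSize < 1 || batchSize > 10000) {
    throw new RangeError("batchSize must be an integer between 1 and 10000");
  }
  return {
    EventSourceArn: streamArn,
    FunctionName: functionName,
    BatchSize: batchSize,
    StartingPosition: "LATEST", // assumption: only process changes made from now on
  };
}

// Assumed usage with the AWS SDK for JavaScript v3 (not executed here):
// const { LambdaClient, CreateEventSourceMappingCommand } = require("@aws-sdk/client-lambda");
// await new LambdaClient({}).send(
//   new CreateEventSourceMappingCommand(buildTriggerInput(streamArn, "new-user-handler"))
// );
```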

Make sure the execution role of your Lambda function is allowed to perform the following actions:

`GetRecords`

`GetShardIterator`

`DescribeStream`

`ListStreams`

    {
      "Effect": "Allow",
      "Action": [
        "dynamodb:DescribeStream",
        "dynamodb:GetRecords",
        "dynamodb:GetShardIterator",
        "dynamodb:ListStreams"
      ],
      "Resource": "STREAM_ARN"
    }

Your stream ARN looks something like this: `arn:aws:dynamodb:eu-central-1:<ACCOUNT_ID>:table/Users/stream/2022-08-21T10:49:51.310`

Replace the timestamp after `stream/` with a wildcard so that the policy keeps working if the stream is disabled and enabled again. The ARN should then look like this: `arn:aws:dynamodb:eu-central-1:<ACCOUNT_ID>:table/Users/stream/*`
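The ARN rewrite can also be scripted. Here is a small sketch with two hypothetical helpers: `toWildcardStreamArn` swaps the timestamp for a wildcard, and `buildStreamReadStatement` produces the policy statement shown above. The account ID `123456789012` is a placeholder.

```javascript
// Replaces the timestamp label after "/stream/" with a wildcard.
function toWildcardStreamArn(streamArn) {
  const marker = "/stream/";
  const index = streamArn.indexOf(marker);
  if (index === -1) {
    throw new Error(`Not a DynamoDB stream ARN: ${streamArn}`);
  }
  return streamArn.slice(0, index + marker.length) + "*";
}

// Builds the IAM policy statement for reading from the table's streams.
function buildStreamReadStatement(streamArn) {
  return {
    Effect: "Allow",
    Action: [
      "dynamodb:DescribeStream",
      "dynamodb:GetRecords",
      "dynamodb:GetShardIterator",
      "dynamodb:ListStreams",
    ],
    Resource: toWildcardStreamArn(streamArn),
  };
}

const exampleArn =
  "arn:aws:dynamodb:eu-central-1:123456789012:table/Users/stream/2022-08-21T10:49:51.310";
console.log(JSON.stringify(buildStreamReadStatement(exampleArn), null, 2));
```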

Lambda Function

Your Lambda function will now be triggered for every insert, update, and deletion in your DynamoDB table. Let's head over to the Lambda service and add the following code to the function:

    exports.handler = async (event) => {
      // Log the full incoming event so we can inspect its structure
      console.info("EVENT\n" + JSON.stringify(event, null, 2));

      // TODO implement
      const response = {
        statusCode: 200,
        body: JSON.stringify('Hello from Lambda!'),
      };
      return response;
    };

After that, we head over to the DynamoDB table and insert an item.

Tip ☝️: You can also check out the event on this website, or generate a test event within the AWS Lambda console.

    {
      "Records": [
        {
          "eventID": "d888c8818651dc51d98b21f921be01fd",
          "eventName": "INSERT",
          "eventVersion": "1.1",
          "eventSource": "aws:dynamodb",
          "awsRegion": "eu-central-1",
          "dynamodb": {
            "ApproximateCreationDateTime": 1661096164,
            "Keys": {
              "createdAt": {
                "S": "2022-08-20"
              },
              "userId": {
                "S": "user_2"
              }
            },
            "NewImage": {
              "createdAt": {
                "S": "2022-08-20"
              },
              "firstname": {
                "S": "Sandro"
              },
              "ttl": {
                "N": "1661166039"
              },
              "userId": {
                "S": "user_2"
              },
              "lastname": {
                "S": "Volpicella"
              }
            },
            "SequenceNumber": "26163800000000026199463306",
            "SizeBytes": 104,
            "StreamViewType": "NEW_IMAGE"
          },
          "eventSourceARN": "arn:aws:dynamodb:eu-central-1:ACCOUNT:table/Users/stream/2022-08-21T15:34:25.720"
        }
      ]
    }

Now you will see the incoming event. You always get a `Records` array, which can contain more than one entry depending on your batch size.

In each record, you can see your item in the `dynamodb` object. The two most important parts of that object are:

Keys: These are the keys of the new item

NewImage: The new user object

We can now use the NewImage and do something with it.

Considerations

Asynchronous Call

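It is important to understand that your Lambda function runs asynchronously to the database change: the write has already completed by the time the function is invoked. Under the hood, Lambda polls the stream through an event source mapping and invokes your function synchronously with each batch of records. This means that a dead-letter queue or destination configured on the function for asynchronous invocations does not apply here. To handle errors more gracefully, configure an on-failure destination, such as an SQS queue or SNS topic, on the trigger itself. It acts like a dead-letter queue for batches that could not be processed.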
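Another way to handle errors more gracefully, especially with batch sizes larger than one, is to tell Lambda which record failed so that it retries from that record instead of the whole batch. The sketch below assumes the trigger has the `ReportBatchItemFailures` response type enabled, a setting that is not covered in this post. `processRecord` is a hypothetical placeholder for your own logic.

```javascript
// Hypothetical placeholder for the real work; throws to signal a failure.
async function processRecord(record) {
  if (!record.dynamodb.NewImage) {
    throw new Error(`Record ${record.eventID} has no NewImage`);
  }
}

// Processes records in order and stops at the first failure,
// reporting its sequence number so Lambda can retry from there.
async function processBatch(event, processFn = processRecord) {
  for (const record of event.Records) {
    try {
      await processFn(record);
    } catch (error) {
      console.error(`Failed at ${record.dynamodb.SequenceNumber}: ${error.message}`);
      return { batchItemFailures: [{ itemIdentifier: record.dynamodb.SequenceNumber }] };
    }
  }
  // An empty list tells Lambda the whole batch succeeded.
  return { batchItemFailures: [] };
}

exports.handler = async (event) => processBatch(event);
```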

Idempotency

Records from a DynamoDB stream are delivered to your function at least once. That means your Lambda function can be invoked with the same event multiple times, for example when a batch is retried after an error. You need to ensure that your processing is idempotent: either the operation itself is idempotent, or you add a layer in between that checks whether an event has already been handled. A good example can be seen in the AWS Lambda Powertools for Python.

Pricing

Streams are priced as follows (us-east-1 example):

The first 2.5 million DynamoDB Streams read requests each month are free

After that, read requests cost $0.02 per 100,000 DynamoDB Streams read request units
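To make these numbers concrete, here is a small worked example using only the two figures above. It assumes the free allowance is applied once per billing period, and the request count of 10 million is made up. `estimateStreamReadCost` is a hypothetical helper, not an official calculator.

```javascript
const FREE_READ_REQUESTS = 2_500_000; // free allowance from the list above
const PRICE_PER_100K_USD = 0.02;      // us-east-1 example price from the list above

// Estimates the cost in USD for a given number of stream read requests.
function estimateStreamReadCost(readRequests) {
  const billable = Math.max(0, readRequests - FREE_READ_REQUESTS);
  return (billable / 100_000) * PRICE_PER_100K_USD;
}

// 10 million requests leave 7.5 million billable, which is 75 blocks of 100,000.
console.log(`$${estimateStreamReadCost(10_000_000).toFixed(2)}`);
```

With these assumptions, 10 million read requests come to about $1.50, and anything up to 2.5 million costs nothing.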

Check out the DynamoDB pricing guide for more info.

Resources

Example implementation of Streams

Best practices and design patterns

Developer Guide for DynamoDB Streams

Final Words

That's it!

If you want to learn more about the fundamentals of AWS, make sure to subscribe to our newsletter at awsfundamentals.com